robot_view_camera_params

{

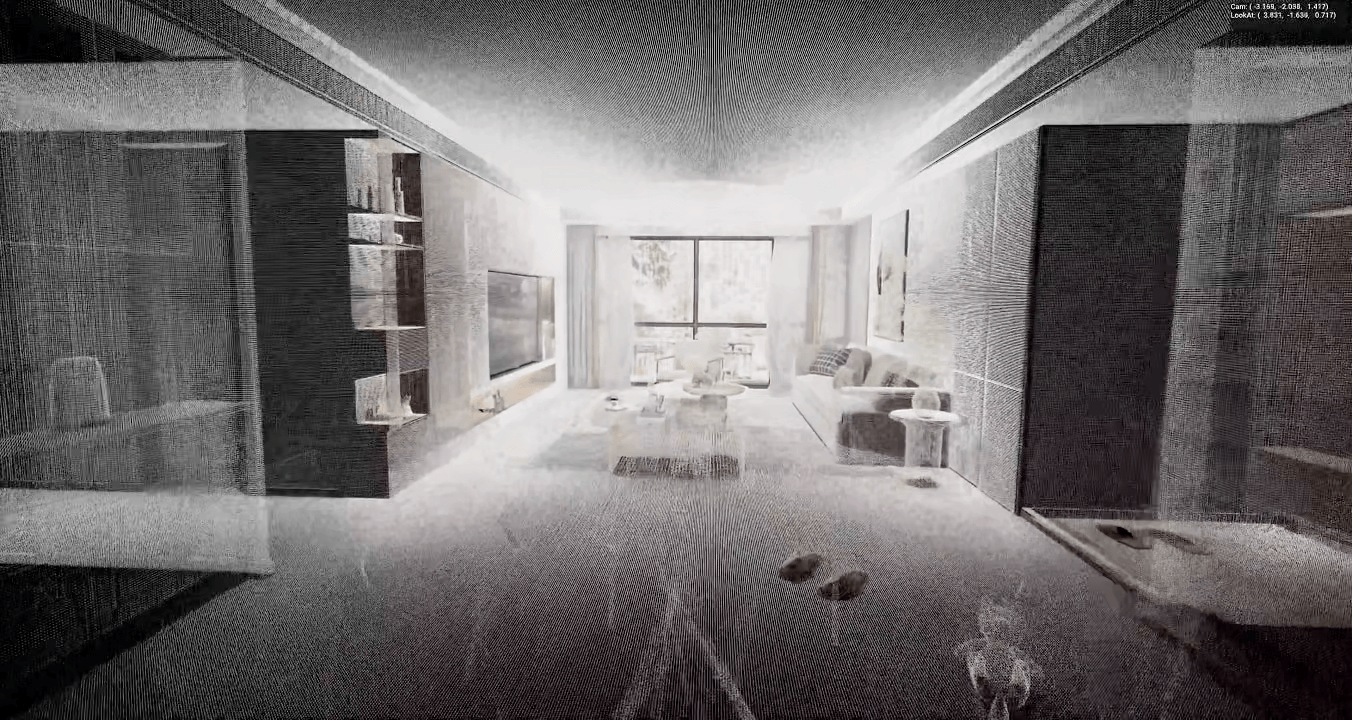
  "camera_name": "Robot-View",
  "timestamp_seconds": 222.375000,
  "location": {
    "x": -2.763041,
    "y": -2.039186,
    "z": 1.500000
  },
  "quaternion": {
    "qw": 0.6509729028,
    "qx": 0.3388750553,
    "qy": -0.3136486709,
    "qz": -0.6025134921
  },
  "robot view_parameters": {
    "class_name": "RobotView Parameters",
    "extrinsic": [
      0.07720405608415604, -0.5718645453453064, 0.816707193851471, -0.0,
      -0.9970153570175171, -0.04428243264555931, 0.0632418692111969, 0.0,
      3.725290742551124e-09, -0.8191520571708679, -0.5735765099525452, -0.0,
      -1.8197823762893677, -0.4416574239730835, 3.245922565460205, 1.0
    ],
    "intrinsic": {
      "height": 2160,
      "intrinsic_matrix": [
        2306.217300415039, 0.0, 0.0,
        0.0, 2306.217300415039, 0.0,
        2088.0, 1080.0, 1.0
      ],
      "width": 4176
    },
    "version_major": 1,
    "version_minor": 0
  },
  "camera_details": {
    "sensor_width_mm": 36.0,
    "sensor_height_mm": 27.0,
    "focal_length_mm": 19.881183624267578,
    "sensor_fit": "HORIZONTAL",
    "fx_pixels": 2306.217300415039,
    "fy_pixels": 2306.217300415039,
    "cx_pixels": 2088.0,
    "cy_pixels": 1080.0
  },
  "notes": {
    "coordinate_system": "Extrinsic matrix uses the computer vision (OpenCV) camera convention (X right, Y down, +Z forward); the quaternion uses the Blender camera convention (Y up, -Z forward)",
    "extrinsic_format": "Column-major 4x4 matrix (world-to-camera transformation)",
    "intrinsic_format": "Column-major 3x3 matrix [fx, 0, 0, 0, fy, 0, cx, cy, 1]",
    "quaternion_order": "WXYZ (scalar first)",
    "location_units": "Blender units (meters)"
  }
}
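The `camera_details` values are consistent with the usual Blender-to-pinhole conversion under horizontal sensor fit: the sensor width spans the full image width, so fx = focal_length_mm * width / sensor_width_mm (19.881183624267578 * 4176 / 36 ≈ 2306.2173), fy = fx for square pixels, and the principal point sits at the image center (2088, 1080). Under horizontal fit the 27 mm `sensor_height_mm` does not enter the calculation. The `intrinsic_matrix` list is laid out column-major: [fx, 0, 0, 0, fy, 0, cx, cy, 1] places cx and cy in the last column of K. The minimal sketch below assumes square pixels and no lens shift; the helper names are hypothetical.

```python
# Minimal sketch: rebuild pinhole intrinsics from "camera_details" and read the
# column-major "intrinsic_matrix" list back into a row-indexed K.
# Assumes sensor_fit == "HORIZONTAL", square pixels and no lens shift.
# Function names are hypothetical helpers, not part of any library.


def focal_length_px(focal_mm, sensor_width_mm, width_px):
    # Horizontal fit: the full sensor width maps onto the full image width.
    return focal_mm * width_px / sensor_width_mm


def k_from_column_major(flat):
    # Column-major storage: element (row, col) is flat[col * 3 + row].
    return [[flat[col * 3 + row] for col in range(3)] for row in range(3)]


WIDTH, HEIGHT = 4176, 2160
fx = focal_length_px(19.881183624267578, 36.0, WIDTH)
fy = fx                          # square pixels
cx, cy = WIDTH / 2, HEIGHT / 2   # principal point at the image center

K = k_from_column_major([2306.217300415039, 0.0, 0.0,
                         0.0, 2306.217300415039, 0.0,
                         2088.0, 1080.0, 1.0])

print(f"fx = fy = {fx:.6f}, cx = {cx}, cy = {cy}")
for row in K:
    print(row)  # rows of K = [[fx, 0, cx], [0, fy, cy], [0, 0, 1]]
```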
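Because `extrinsic` is stored column-major, the translation t occupies the last four entries (-1.8198, -0.4417, 3.2459, 1.0) rather than every fourth entry. A minimal sketch, assuming the world-to-camera form p_cam = R * p_world + t with no lens distortion: the camera center is C = -R^T t, which reproduces `location` to about six decimal places, and a world point maps to pixels through fx, fy, cx, cy. The helper names are hypothetical; the constants are copied from the JSON above.

```python
# Minimal sketch: unpack the column-major world-to-camera "extrinsic", recover
# the camera center, and project a world point with a distortion-free pinhole.
# Assumes p_cam = R @ p_world + t (camera frame: X right, Y down, +Z forward).
# Helper names are hypothetical.

EXTRINSIC = [
    0.07720405608415604, -0.5718645453453064, 0.816707193851471, -0.0,
    -0.9970153570175171, -0.04428243264555931, 0.0632418692111969, 0.0,
    3.725290742551124e-09, -0.8191520571708679, -0.5735765099525452, -0.0,
    -1.8197823762893677, -0.4416574239730835, 3.245922565460205, 1.0,
]
FX = FY = 2306.217300415039
CX, CY = 2088.0, 1080.0


def mat4_from_column_major(flat):
    # Element (row, col) is stored at flat[col * 4 + row].
    return [[flat[col * 4 + row] for col in range(4)] for row in range(4)]


def camera_center(E):
    # For [R | t] mapping world to camera, the camera center is C = -R^T t.
    return [-sum(E[k][j] * E[k][3] for k in range(3)) for j in range(3)]


def project(E, p_world):
    # World -> camera frame, then perspective division and intrinsics.
    x, y, z = (sum(E[i][j] * p_world[j] for j in range(3)) + E[i][3] for i in range(3))
    if z <= 0:
        return None  # point is behind the camera
    return FX * x / z + CX, FY * y / z + CY


E = mat4_from_column_major(EXTRINSIC)
C = camera_center(E)
print("camera center:", [round(c, 6) for c in C])  # compare with "location"

# Row 2 of R is the camera's +Z (optical) axis expressed in world coordinates.
forward = E[2][:3]
ahead = [C[i] + 2.0 * forward[i] for i in range(3)]
print("projection of point 2 m ahead:", project(E, ahead))
```

The point placed 2 m along the camera's +Z axis projects to the principal point (2088, 1080) up to float32 rounding, as expected for a point on the optical axis.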
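The `quaternion` and the rotation block of `extrinsic` appear to describe the same orientation in two camera conventions. Reading the WXYZ quaternion as the Blender camera's camera-to-world rotation (Blender camera: Y up, looking down -Z), negating its Y and Z axes and transposing reproduces the extrinsic rotation to within float32 rounding. The sketch below checks that numerically in plain Python; the function names are hypothetical. It also recovers the pitch: the camera's +Z axis points 35 degrees below the horizontal, since 0.8191520571708679 and -0.5735765099525452 are cos 35° and -sin 35° to float32 precision.

```python
import math

# Minimal sketch: relate the WXYZ "quaternion" (read here as Blender's
# camera-to-world rotation) to the rotation block of the OpenCV-style extrinsic.
# Function names are hypothetical.


def quat_wxyz_to_matrix(qw, qx, qy, qz):
    # Standard unit-quaternion -> rotation-matrix formula, scalar first.
    return [
        [1 - 2 * (qy * qy + qz * qz), 2 * (qx * qy - qw * qz), 2 * (qx * qz + qw * qy)],
        [2 * (qx * qy + qw * qz), 1 - 2 * (qx * qx + qz * qz), 2 * (qy * qz - qw * qx)],
        [2 * (qx * qz - qw * qy), 2 * (qy * qz + qw * qx), 1 - 2 * (qx * qx + qy * qy)],
    ]


def blender_c2w_to_opencv_w2c(R_c2w):
    # Negate the camera Y and Z axes (Blender: Y up, -Z forward ->
    # OpenCV: Y down, +Z forward), then transpose to get world-to-camera.
    flip = (1.0, -1.0, -1.0)
    return [[R_c2w[j][i] * flip[i] for j in range(3)] for i in range(3)]


R_from_quat = blender_c2w_to_opencv_w2c(
    quat_wxyz_to_matrix(0.6509729028, 0.3388750553, -0.3136486709, -0.6025134921))

# Rotation block of "extrinsic", rearranged from column-major into rows.
R_extrinsic = [
    [0.07720405608415604, -0.9970153570175171, 3.725290742551124e-09],
    [-0.5718645453453064, -0.04428243264555931, -0.8191520571708679],
    [0.816707193851471, 0.0632418692111969, -0.5735765099525452],
]

max_err = max(abs(R_from_quat[i][j] - R_extrinsic[i][j])
              for i in range(3) for j in range(3))
print(f"max element difference: {max_err:.1e}")

# Row 2 of R is the camera's +Z (viewing) direction in world coordinates (Z up).
pitch_deg = math.degrees(math.asin(-R_extrinsic[2][2]))
print(f"camera pitched {pitch_deg:.2f} degrees below horizontal")
```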